This four-part blog series will discuss the dominant role that hyperscale plays in today’s state-of-the-art cloud computing and related data center infrastructure environment. It will present various aspects of the hyperscale ecosystem, including definitions, market size, challenges, and solutions. It will conclude with DP Facilities’ unique value-add in the hyperscale space with respect to the enterprise.

What is Hyperscale?

In the IT world, scaling computer architecture typically entails increasing one or more of these hardware resources: computing power, memory, networking infrastructure, or storage capacity.

However, with the arrival of Big Data and cloud computing, the ability to rapidly scale the hardware resources of the underlying data center(s) in response to increasing demand has become a challenge of paramount importance.

Hyperscale is not simply the ability to scale, but the ability to scale rapidly and in huge multiples as demand requires. Hyperscale architecture therefore had to replace traditional high-grade computing elements (blade servers, storage networks, network switches, network control hardware, obsolete power supply systems, etc.) with a stripped-down, cost-effective infrastructure design that supports converged networking, software-based control of those hardware resources, and a base level of virtual machines.

In the IT domain, the vertical or “scaling up” approach to computing architecture typically involves adding more power to existing machines or more capacity to existing storage. Hyperscale architecture instead relies on “scaling out,” or horizontal scalability: adding more virtual machines to a data center’s infrastructure for high throughput, or remotely increasing redundant capacity for high availability and fault tolerance. Thus, hyperscaling is done not only at a physical level, with a data center’s infrastructure and distribution systems, but also at a logical level, with computing tasks and network performance.
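
The difference between scaling up and scaling out can be illustrated with a toy model. The sketch below is only an illustration under simplifying assumptions: the `Cluster` class, the helper functions, and the capacity units are all hypothetical, and real capacity planning involves far more than summing numbers. It shows that both approaches can reach the same total capacity, while the scaled-out cluster keeps more capacity when a single machine fails.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Cluster:
    """Toy model: each entry in `nodes` is one machine's capacity (arbitrary units)."""
    nodes: List[float] = field(default_factory=list)

    def total_capacity(self) -> float:
        return sum(self.nodes)

    def capacity_after_largest_failure(self) -> float:
        # Worst single-machine failure: the largest machine goes offline.
        return self.total_capacity() - max(self.nodes, default=0.0)


def scale_up(cluster: Cluster, factor: float) -> Cluster:
    """Vertical scaling: make each existing machine more powerful."""
    return Cluster([capacity * factor for capacity in cluster.nodes])


def scale_out(cluster: Cluster, extra_nodes: int, node_capacity: float) -> Cluster:
    """Horizontal scaling: add more machines of a given size."""
    return Cluster(cluster.nodes + [node_capacity] * extra_nodes)


base = Cluster([10.0, 10.0])
up = scale_up(base, 2.0)        # two machines of 20 units each
out = scale_out(base, 2, 10.0)  # four machines of 10 units each

print(up.total_capacity(), up.capacity_after_largest_failure())
print(out.total_capacity(), out.capacity_after_largest_failure())
```

In this toy example both clusters total 40 units, but losing one machine leaves 20 units in the scaled-up cluster and 30 in the scaled-out one, which is the fault-tolerance argument made above.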

What is a Hyperscale Data Center (HDC)?

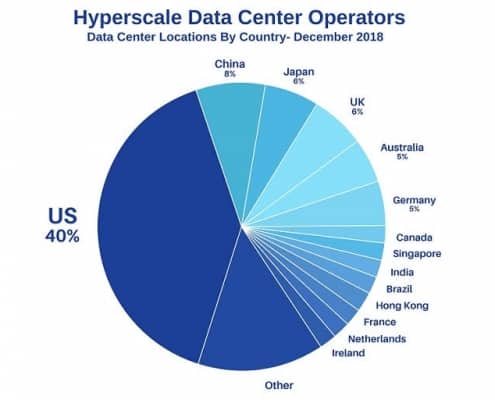

A Hyperscale Data Center is a data center that meets the hyperscale requirements defined above. More importantly, an HDC is far more complex and sophisticated than a traditional data center. First and foremost, an HDC is a significantly larger facility than a typical enterprise data center. Per International Data Corporation (IDC), a market intelligence firm, a data center qualifies as hyperscale when it exceeds 5,000 servers and 10,000 square feet. But this is only a starting point: the largest cloud providers (Amazon, Google, and Microsoft) house hundreds of thousands of servers in HDCs around the world.
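
The size threshold quoted above can be written as a simple check. This is a minimal sketch: the function name and constants are hypothetical, the numbers are the ones attributed to IDC in the text, and "exceeds" is read here as strictly greater than.

```python
# Thresholds as quoted from IDC in the text; a floor, not a full definition.
MIN_SERVERS = 5_000
MIN_FLOOR_AREA_SQFT = 10_000


def qualifies_as_hyperscale(servers: int, floor_area_sqft: float) -> bool:
    """Return True when a facility exceeds both size thresholds (hypothetical helper)."""
    return servers > MIN_SERVERS and floor_area_sqft > MIN_FLOOR_AREA_SQFT


print(qualifies_as_hyperscale(servers=800, floor_area_sqft=4_000))
print(qualifies_as_hyperscale(servers=120_000, floor_area_sqft=500_000))
```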

Again, per IDC, HDCs require architecture that allows for a homogeneous scale-out of greenfield applications. An HDC must not only enable personalization of nearly every aspect of its computing and configuration environment, but also focus on automation, or “self-healing.” This term implies that an HDC accepts that some breaks and delays will inevitably occur, but its environment is so well controlled that it automatically adjusts and corrects itself.
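
One common way to picture self-healing is a reconciliation step that compares the current state of a fleet with a desired state and corrects any drift. The sketch below is an assumption for illustration, not a description of how any particular HDC operator implements it. The `reconcile` function, the `vm-N` naming, and the dictionary-based fleet are all hypothetical.

```python
import itertools
from typing import Dict, Iterator


def reconcile(nodes: Dict[str, bool], desired_healthy: int,
              new_ids: Iterator[str]) -> Dict[str, bool]:
    """Drop failed nodes and add replacements until the desired count is healthy.

    `nodes` maps a node name to its health flag (True means healthy).
    """
    healthy = {name: True for name, ok in nodes.items() if ok}
    while len(healthy) < desired_healthy:
        healthy[next(new_ids)] = True  # stand-in for provisioning a new VM
    return healthy


fleet = {"vm-1": True, "vm-2": False, "vm-3": True}
fresh_ids = (f"vm-{i}" for i in itertools.count(4))

healed = reconcile(fleet, desired_healthy=3, new_ids=fresh_ids)
print(healed)
```

Here the failed `vm-2` is removed and a replacement `vm-4` is added without operator intervention. In practice such a step would run continuously and would act on real health checks rather than a static dictionary.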

In Part II of this blog series, we will begin with a definition of the HDC market size and then go on to explain the primary accomplishments of the HDC in terms of sustainability, from a power, cooling, availability, and load-balancing standpoint.